The internet is filled with data that can be extracted through web scraping. The more you know about the process, the easier it becomes and the more data you will have at your disposal.

Data is crucial to everything we do today and even more crucial for business owners. Multiple tools can help you gather data. But while some tools are simple and effective, others are complicated and cost too much to use or maintain.

This has led many business owners to research the best tools to use for web scraping. Open-source tools are a natural place to start: they tend to be less expensive, and a large community behind them means support is easy to find for anyone who gets stuck.

But we all know that not all open-source tools are the same. Today, we dive into the best open-source tools to use for your next web scraping project.

Why You Need the Right Library for Web Scraping

Web scraping is essentially the process of using automated software to interact with multiple sources and extract the data they contain.

The tooling often includes proxies and scrapers, and it must work automatically, with as little human input as possible.

This removes the monotony of performing repetitive tasks and ensures the data is harvested quickly, in real time, and with as few errors as possible.

There are several reasons to use only the best libraries for this exercise. The following are some of the most common:

1. The Right Library Is Cost-Effective

While web scraping is essential, it is unwise to put in so much that the rest of your business starts to suffer from underfunding.

Using the right library cuts the cost of gathering data and helps you keep web scraping spending to a minimum.

2. The Right Library Provides An All-Round System

Web scraping is often described as a single process, but it breaks down into smaller steps that work together to collect millions of data points on a regular basis.

With the right library, you can send out requests, handle access restrictions, interact with the target servers, harvest data, parse it, and save it to storage, all in one sweep.
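The steps above can be sketched as a small pipeline. This is only an illustration using Python's standard library, not a recommended production setup: the function names `fetch`, `parse_links`, and `store`, the `example-scraper/0.1` user agent, and the sample markup are all assumptions made for this example. The fetch step is defined but not called here, so the sketch runs without a network connection.

```python
import csv
import io
import urllib.request
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects a (text, href) pair for every <a href="..."> tag it sees."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        # Only keep text that appears inside an open link.
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append(("".join(self._text).strip(), self._href))
            self._href = None


def fetch(url, timeout=10):
    """Step 1: send the request and return the decoded response body."""
    request = urllib.request.Request(
        url, headers={"User-Agent": "example-scraper/0.1"}  # hypothetical agent name
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def parse_links(html_text):
    """Step 2: parse the markup and harvest the data we care about."""
    parser = LinkCollector()
    parser.feed(html_text)
    parser.close()
    return parser.links


def store(rows, out):
    """Step 3: write the harvested rows to any file-like object as CSV."""
    writer = csv.writer(out)
    writer.writerow(["text", "href"])
    writer.writerows(rows)


# Inline sample markup stands in for the result of fetch(url).
SAMPLE = '<ul><li><a href="/a">First</a></li><li><a href="/b">Second</a></li></ul>'
rows = parse_links(SAMPLE)
buffer = io.StringIO()
store(rows, buffer)
```

In a real run, `parse_links(fetch(url))` would replace the inline sample, and `store` would receive an open file instead of an in-memory buffer.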

3. It Guarantees Speed

Web scraping is an intensive task that is performed very often. To keep the data fresh and accurate, the process needs to be carried out as quickly as possible.

Not all libraries deliver such speed, but the best ones keep the overhead of sending requests and parsing responses low, so data moves back and forth quickly.

The faster this happens, the higher the library is rated.

4. It Works Automatically

We have mentioned how web scraping can be monotonous, taking too much energy and time when done manually. Harvesting millions of data points repeatedly every day can be overwhelming and take a toll on even the best of us.

This is why web scraping needs to be automated, and the best tools ensure the process isn’t only automated but also smooth.

That way, you can enter a command once and watch the tools do the rest.

5. The Right Library Needs Minimal Maintenance

The cost of owning a tool covers both what you paid for it and what it costs to maintain and keep in good working condition.

Some tools need frequent and expensive maintenance, while the right libraries need little or none, and only occasionally when they do.

Most Popular Libraries Used For Web Scraping

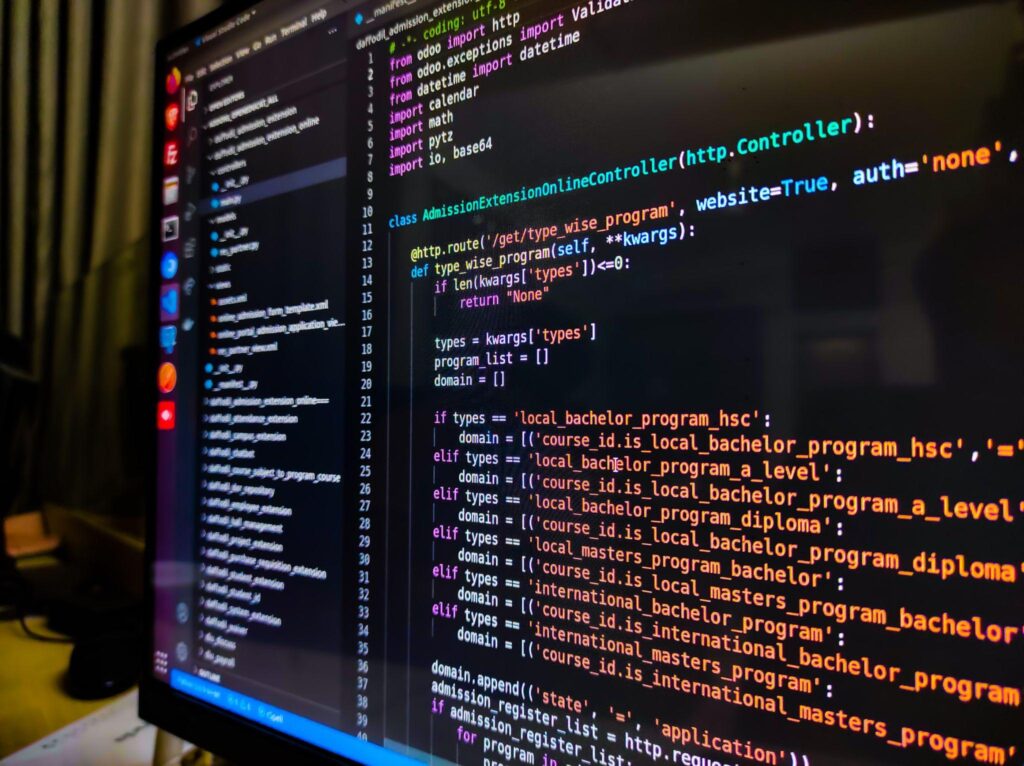

We choose Python as our best web scraping language because it is common, easy to use, and cost-effective.

Hence, we will describe some Python libraries as the best libraries for web scraping tasks.

1. Beautiful Soup Library

This is the most recognized Python library used for web scraping. It is easy to learn and can be used by anyone, including beginners.

It automatically converts incoming documents to Unicode and outgoing documents to UTF-8, and it is used mostly to build a parse tree that makes HTML and XML files easy to navigate and search. It does not parse on its own but sits on top of a parser, which is why it is often used in combination with the LXML library.

Its major advantages are that it requires only a few lines of code to work, is easy to use, has extensive documentation, is very robust, and comes with automatic encoding detection.

However, it has one disadvantage: it is slower than the LXML library.
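As a minimal sketch of that parse-tree workflow with Beautiful Soup (the `bs4` package), the example below pulls names, links, and prices out of a small inline document. The sample markup, the `item` and `price` class names, and the `extract_items` helper are assumptions made up for illustration.

```python
from bs4 import BeautifulSoup

# Hypothetical markup standing in for a downloaded page.
SAMPLE_HTML = """
<html><body>
  <h1>Product list</h1>
  <ul>
    <li class="item"><a href="/p/1">Keyboard</a> <span class="price">49.99</span></li>
    <li class="item"><a href="/p/2">Mouse</a> <span class="price">19.50</span></li>
  </ul>
</body></html>
"""


def extract_items(markup, parser="html.parser"):
    """Build a parse tree and return one dict per <li class="item">."""
    soup = BeautifulSoup(markup, parser)
    items = []
    for li in soup.find_all("li", class_="item"):
        link = li.find("a")
        price = li.find("span", class_="price")
        items.append(
            {
                "name": link.get_text(strip=True),
                "url": link["href"],
                "price": float(price.get_text(strip=True)),
            }
        )
    return items


items = extract_items(SAMPLE_HTML)
```

The sketch uses Python's built-in `html.parser` so that it has no extra dependency. If LXML is installed, passing `parser="lxml"` makes Beautiful Soup use it as the underlying parser instead.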

2. Selenium Library

Some Python libraries can only scrape static websites and struggle once a website has dynamic features.

This is not so with the Selenium library. Because it drives a real browser, it can scrape data from a website whether its content is static or generated dynamically as the page loads.

It has the following advantages: it performs most tasks a human would do in a browser, it can automate those activities, including web scraping, and it works on websites that other libraries struggle with.

Its major disadvantages include being slow and difficult to set up, requiring a lot of storage, and being unsuitable for large-scale operations.
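A hedged sketch of what this looks like with Selenium 4 follows. It assumes Chrome and a matching driver are available on the machine. The function name `scrape_dynamic_texts` and its parameters are hypothetical, and the function is only defined here, not called, because calling it launches a browser.

```python
def scrape_dynamic_texts(url, css_selector, timeout=10):
    """Open a page in headless Chrome and return the text of matching elements."""
    # Imported inside the function so the sketch loads even where
    # Selenium is not installed; calling the function still requires it.
    from selenium import webdriver
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    options = webdriver.ChromeOptions()
    options.add_argument("--headless=new")  # run without a visible window
    driver = webdriver.Chrome(options=options)
    try:
        driver.get(url)
        # Wait until the page's scripts have rendered at least one match.
        WebDriverWait(driver, timeout).until(
            EC.presence_of_element_located((By.CSS_SELECTOR, css_selector))
        )
        elements = driver.find_elements(By.CSS_SELECTOR, css_selector)
        return [element.text for element in elements]
    finally:
        # Always close the browser, even if the wait times out.
        driver.quit()
```

The explicit wait is the part that matters for dynamic pages: content rendered by scripts may not exist yet when the page first loads. Starting a full browser for every page is also what makes this approach slow at scale.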

3. LXML Library

This library is a fast, high-performance tool for parsing and processing HTML and XML files.

It is well known for combining the speed of the C libraries it is built on, libxml2 and libxslt, with the simplicity of a Python API that closely follows ElementTree. It can be learned by taking an LXML tutorial. A recent article by Oxylabs covers how to scrape public data with LXML.

Because of these qualities, it is commonly used to extract large datasets.

Its major merits include the following: it is one of the fastest parsers available, it is very lightweight, and it combines ElementTree-style trees with a Python API.

Its known disadvantages are that it may be unsuitable for poorly designed HTML files and that it may not be beginner-friendly.
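For comparison, here is a minimal sketch of extraction with LXML using XPath queries. The sample markup, the class names, and the `extract_rows` helper are assumptions made up for this example.

```python
from lxml import html

# Hypothetical markup standing in for a downloaded page.
SAMPLE_HTML = """
<html><body>
  <ul>
    <li class="item"><a href="/p/1">Keyboard</a> <span class="price">49.99</span></li>
    <li class="item"><a href="/p/2">Mouse</a> <span class="price">19.50</span></li>
  </ul>
</body></html>
"""


def extract_rows(markup):
    """Parse the markup and return (name, url, price) tuples via XPath."""
    tree = html.fromstring(markup)
    rows = []
    for li in tree.xpath('//li[@class="item"]'):
        # string(...) returns the text content of the first matching node.
        name = str(li.xpath("string(./a)")).strip()
        url = str(li.xpath("./a/@href")[0])
        price = float(li.xpath('string(./span[@class="price"])'))
        rows.append((name, url, price))
    return rows


rows = extract_rows(SAMPLE_HTML)
```

XPath expressions are compact but take some learning, which is part of why LXML can feel less beginner-friendly than Beautiful Soup.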

Conclusion

The best web scraping libraries are those that get the job done in the cheapest and easiest ways possible.

We have highlighted the Python libraries above because we believe they are among the best web scraping libraries available today.
